You may have seen this tragic story about a teenager who committed suicide and used ChatGPT to plan and work up the nerve to go through with it.

-

It will be very difficult for those who run LLMs to "fix" the technology. It's not just that "there aren't guard rails"; the whole *premise* of the technology, "use all of human text to create paragraphs that validate my prompt", is ... bad. The problem is structural.

We do not need the validation machines. They cannot create anything new. I haven't been in the AI hater camp but this might just push me over because I don't see how they can meaningfully fix this.

Reading some of the chat logs from this teen reminded me of a support group I was in during a dark period in my life. Things like "no one has a right to make you go on living" were things we discussed. And things we debugged together. Are our fragments of text in the toxic mix that this young man encountered?

But without the human people?

Some of it sounds like the group. But if they were ... well a machine who didn't care if you lived or died.

-

Microsoft's new t's&c's (in effect from the 30th of September) specifically state that their AI services are not meant to be used.

What?

-

@futurebird There’s also the trouble of getting people to not want validation machines.

When OpenAI made a version of ChatGPT that was more analytical and less sycophantic, which programmers like myself preferred, there was such an uproar from the people who were using it as a conversation partner that they ended up reinstating the older version.

-

It just occurred to me that some people might think that LLMs are able to invent new ideas because they just don't have much exposure to the breadth and diversity of ideas expressed on the internet.

The range of ideas, the finesse and novelty of expression are vast. Every LLM post makes me think "yeah, someone has written something like that once on Usenet."

But, maybe some people think there is someone new to meet inside of the machine, a person with new ideas?

-

below my primary toot is the copy&paste text for the Copilot and AI services sections - it's a boring read with lots of "not intended" and similar phrasing - the second paragraph states it is not a replacement for professional services - the last two paragraphs are about facial recognition

---

AI Services

s. AI Services. "AI services" are services or features thereof that use Artificial Intelligence (AI) technologies, including any generative AI services.

i. No Professional Advice. AI services are not designed, intended or to be used as substitutes for professional advice.

---

quangobaud (@miguelpergamon@kolektiva.social)

Attached: 2 images A-ha-ha-ha! Ah-ha-ha-ha-ha-hah! Ah - *squick* - *died laughing* #Microsoft #UserServiceAgreement #AI #TIL "Microsoft AI services are not designed to be used" #IMHO

kolektiva.social (kolektiva.social)

-

@futurebird my question when I see things like this is: what was he getting from the LLM that he couldn’t get from the humans in his life? it’s not *just* validation. it’s a feeling of being understood.

How can communities do better to *compete* with LLMs at *being a community*

at providing that help and understanding

the other day, for example, I saw an ad for an LLM based language tutoring app. The advertisement’s character said “i need to learn conversational french, but I can’t find a practice partner, and I don’t want to waste my girlfriend’s time”

these things are surrogate communities in an increasingly hostile and disconnected world

-

@futurebird Though I do wonder where the ratio sits between people who realize that this is effectively a machine designed to lie to them by pretending to be human but use it anyway and those who genuinely think this is some sort of human-like “intelligence” that they’re engaging with.

And if that second group realized that this is just fancy autocomplete, how many would still want to use it?

-

@futurebird

I've suffered depression all my life. As a reader, I've read endlessly about it. Mostly books, but plenty online. Online, it seems to me topics such as self-harm and suicide are dominated by fiction, by reporters' misperceptions, transcripts of conversations with psychologists that never should have been public, and, last but probably most influential, murder forums like 4chan and kiwi farms. The modern "biggest is bestest" approach to LLM training hoovers all that up.

-

@futurebird

I agree. And I think the evil genius of a chat interface wrapper for LLMs is the integration of lottery logic, pseudorandom number generation, in generating responses. The underlying lottery facet of its design combines synergistically with the human desire to see human meaning in text, and the endless bombardment of "ARTIFICIAL INTELLIGENCE!!" marketing.

-

@futurebird looking at the kinds of people who have been driven out of LLM research, and out of LLM businesses, it seems the result is functionally equivalent to a conscientious and deliberate effort to drive out everyone who would be genuinely interested in fixing the technology. All the people who wanted to fix it have been chased out of the building.

-

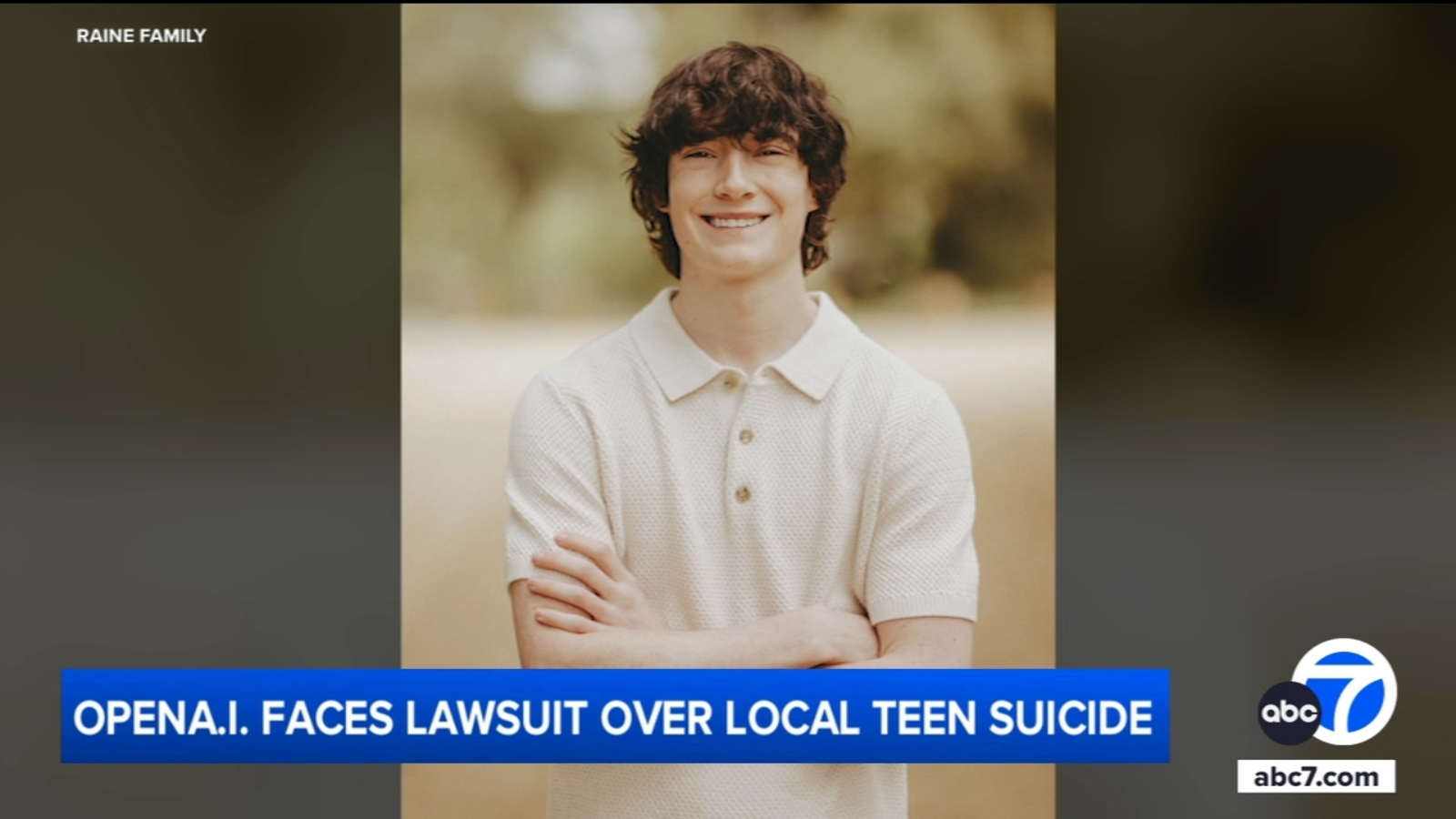

You may have seen this tragic story about a teenager who committed suicide and used ChatGPT to plan and work up the nerve to go through with it. If you are skeptical that an LLM could really be responsible, the details of this case will challenge you.

With LLMs, "the user is always right": they are validation machines and will reinforce and validate any idea presented in a prompt.

Any idea, no matter how bad, can be refined, amplified.

Parents of OC teen sue OpenAI, claiming ChatGPT helped their son die by suicide

Parents of Orange County teen Adam Raine are suing OpenAI, claiming that the AI-powered chatbot ChatGPT helped their son die by suicide.

ABC7 Los Angeles (abc7.com)

@futurebird it's a moral panic. he could have found these details with Google or at the library. he could have found people that would encourage and validate his choice. it happens all the time.

expecting an LLM to somehow magically stop him is seeing it as some kind of self-aware powerful entity, and not the automatic tool it is.

-

This is what ChatGPT's lawyers will say.

And when it comes to how to address this it grows more complex. We know that things like age verification are a joke and only destroy privacy and shield companies from liability without making anyone safer.

Where I do see an opening is in "truth in advertising": these systems are being offered up to solve problems they cannot solve. Customers who use them do not have a clear understanding of their limitations.

-

@futurebird

Yes, it really does sound like it must be pulling from those sorts of support groups where people say really fucked up shit all the time. Trauma will do that to you. Having a machine mindlessly imitating the stuff that we say when we are at our most vulnerable, most unsure, most desperate for connection is really disturbing... An empty simulacrum of both the vulnerability & compassion of extremely wounded people, simply repeating their trauma as a string of tokens.

-

I've always found social media policies about the topic of suicide frustrating. Among the words that creators will self-censor, it's at the top of the list. "unalive," "self end," all of this disgusting avoidant language.

It's a delicate thing to create spaces where people can express their feelings and get support to first feel less alone and then later find a way to go on and thrive.

I understand that a company has no interest in parsing all of that. So they just ban words.

-

But those banned words and the whole taboo might have kept this kid from speaking to a person who could have helped him.

Another problem is the idea that the moment someone says the word suicide you'd better call the cops and turn them over to someone who will restrict their liberties. But when therapy is out of reach financially for most people, who else is there to call?

As is so often the case, it's not the tech but the greater negligence and failure to invest.